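Installing Kafka as a Windows service with nssm (video tutorial)

At work we use Kafka to record operation logs from our aging ASP.NET Web Forms website. Without Docker, though, installing and deploying Kafka directly on a Windows server is a real chore. You have to run it from a black command-line window, and every time the server restarts you have to do it all over again.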

Normally, getting Kafka installed, deployed and running takes the following steps:

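- Step 1: set the Java environment variable JAVA_HOME

- Step 2: edit the ZooKeeper configuration file

- Step 3: edit Kafka's server.properties configuration file

- Step 4: run the commands that start ZooKeeper (zookeeper-server-start.bat) and then Kafka (kafka-server-start.bat), in that order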
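The order in step 4 matters. Kafka 2.2 connects to ZooKeeper at startup and gives up if it cannot reach it within zookeeper.connection.timeout.ms. When scripting this step, one option is to wait until ZooKeeper's client port accepts TCP connections before starting Kafka. The Python sketch below assumes ZooKeeper listens on its default client port 2181, which is the value zookeeper.connect uses later in this article. `wait_for_port` is a hypothetical helper and is not part of Kafka or ZooKeeper.

```python
import socket
import time


def wait_for_port(host: str, port: int, timeout: float = 30.0, interval: float = 0.5) -> bool:
    """Poll host:port until a TCP connection succeeds; return False on timeout."""
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        try:
            # A completed TCP handshake means some process is listening on the port.
            with socket.create_connection((host, port), timeout=interval):
                return True
        except OSError:
            # Refused or timed out: the server is not accepting connections yet.
            time.sleep(interval)
    return False
```

For example, `wait_for_port("localhost", 2181, timeout=60)` returns True as soon as ZooKeeper accepts connections, and kafka-server-start.bat can be launched at that point. An open port only shows that the process is listening. It does not prove that ZooKeeper is fully healthy.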

After every restart of the Windows server, step 4 has to be repeated by hand.

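Going through all of that is still tedious. Is there a way to install ZooKeeper and Kafka as services without Docker, and to deploy them as simply as possible? Yes. The simplest approach is to run a single batch file, which also sets up the environment variable for you. You still need to edit the Kafka configuration file beforehand, mainly the IP address and the listening port.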

With that goal in mind, I put all the required software together in one place, as shown in the figure below:

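You therefore need to download the following packages in advance: the Java JDK (I used jdk1.8.0_202), Kafka, nssm and ZooKeeper. The exact versions can be seen in the figure above. The folder names used later in install.bat are jdk1.8.0_202, kafka_2.12-2.2.0, nssm-2.24 and zookeeper-3.4.10.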

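What is nssm?

NSSM is a service wrapper. It can wrap an ordinary exe, a bat file or almost any other program so that the program runs like a Windows service. You can think of it as a service shell that your program runs inside.

The official site describes it as follows: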

NSSM – the Non-Sucking Service Manager

nssm is a service helper which doesn’t suck. srvany and other service helper programs suck because they don’t handle failure of the application running as a service. If you use such a program you may see a service listed as started when in fact the application has died. nssm monitors the running service and will restart it if it dies. With nssm you know that if a service says it’s running, it really is. Alternatively, if your application is well-behaved you can configure nssm to absolve all responsibility for restarting it and let Windows take care of recovery actions.

nssm logs its progress to the system Event Log so you can get some idea of why an application isn’t behaving as it should.

nssm also features a graphical service installation and removal facility. Prior to version 2.19 it did suck. Now it’s quite a bit better.

https://nssm.cc/
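The restart-on-exit behaviour described in the quote is the core idea behind a service wrapper. The following Python sketch illustrates that idea only. It is not how nssm is implemented: nssm is a native Windows program with its own restart throttling and exit-code actions. `supervise` is a hypothetical name.

```python
import subprocess
import sys
import time
from typing import Sequence


def supervise(cmd: Sequence[str], max_restarts: int = 3, delay: float = 1.0) -> int:
    """Run cmd and restart it each time it exits, at most max_restarts times.

    Returns the total number of times the process was started.
    """
    starts = 0
    while True:
        starts += 1
        result = subprocess.run(list(cmd))  # blocks until the child process exits
        if starts > max_restarts:
            return starts  # initial run plus max_restarts restarts used up
        print(f"exited with code {result.returncode}, restarting in {delay}s",
              file=sys.stderr)
        time.sleep(delay)  # short pause so a crashing program does not spin
```

A real wrapper would also stop restarting when the service is stopped on purpose, and it would report what happened. nssm, for instance, writes to the Event Log.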

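This site has published a similar article before, but that one only installed Kafka as a Windows service and was still not very convenient. The approach shared today is considerably easier.

Video tutorial: installing Kafka as a Windows service with nssm

Before you start, take a look at the video, which shows the whole procedure on a server. It really is simple. The only manual step in the video is editing Kafka's server.properties file. The batch file creates the Java environment variable for you.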


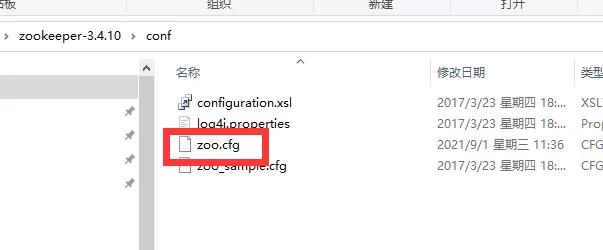

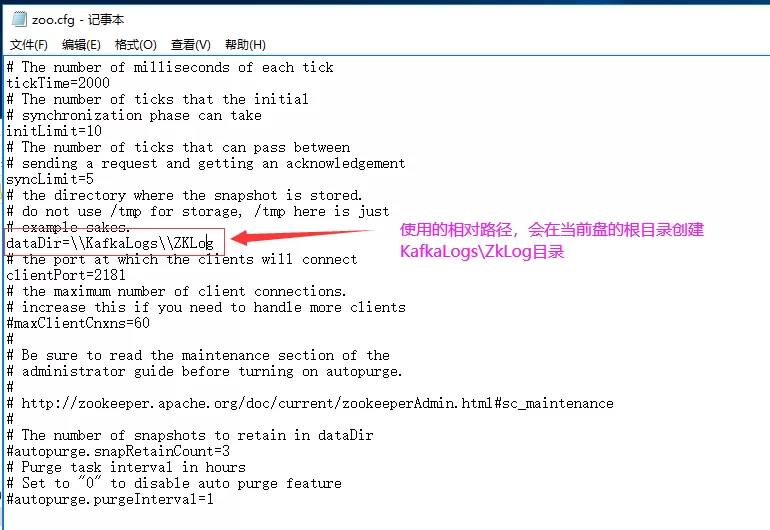

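Edit the ZooKeeper configuration file

Open zoo.cfg in the ZooKeeper conf folder (zookeeper-3.4.10\conf\zoo.cfg) and change the dataDir entry to the directory where ZooKeeper should store its data.

Change it as follows: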

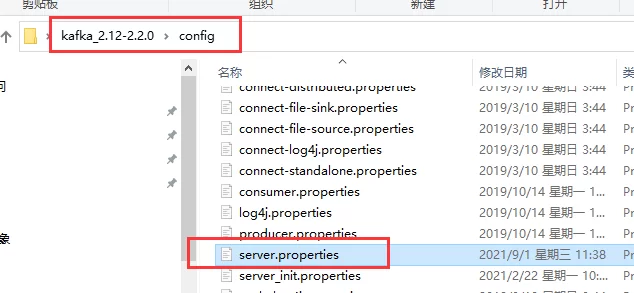
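The edit shown in the screenshot can also be scripted. One detail is worth knowing: ZooKeeper 3.4 reads zoo.cfg with java.util.Properties, where a backslash is an escape character. A Windows path such as D:\zkdata is therefore safest written as D:/zkdata. The zoo_sample.cfg shipped with 3.4.x sets dataDir=/tmp/zookeeper. Both `set_data_dir` and the path D:\kafka-env\zookeeper-data used below are hypothetical.

```python
import re
from pathlib import PureWindowsPath

# Matches an active (uncommented) dataDir line; [ \t]* keeps the match on one line.
_DATA_DIR = re.compile(r"^[ \t]*dataDir[ \t]*=.*$", re.MULTILINE)


def set_data_dir(cfg_text: str, data_dir: str) -> str:
    """Return zoo.cfg text whose dataDir entry points at data_dir.

    zoo.cfg is loaded with java.util.Properties, where a backslash is an
    escape character, so the Windows path is written with forward slashes.
    """
    line = "dataDir=" + PureWindowsPath(data_dir).as_posix()
    if _DATA_DIR.search(cfg_text):
        # Use a function so the replacement text is taken literally.
        return _DATA_DIR.sub(lambda _m: line, cfg_text, count=1)
    # No dataDir entry yet: append one at the end.
    return cfg_text.rstrip("\n") + "\n" + line + "\n"
```

For example, `set_data_dir(text, r"D:\kafka-env\zookeeper-data")` turns the sample's `dataDir=/tmp/zookeeper` into `dataDir=D:/kafka-env/zookeeper-data`.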

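Edit the Kafka configuration file

Open the Kafka directory and edit the server.properties file (kafka_2.12-2.2.0\config\server.properties) shown in the figure below.

The full configuration is shown below. The lines you normally need to change are listeners and advertised.listeners, which should use the server's IP address (10.10.10.225 here), plus log.dirs and zookeeper.connect.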

```properties
# Licensed to the Apache Software Foundation (ASF) under one or more
# contributor license agreements.  See the NOTICE file distributed with
# this work for additional information regarding copyright ownership.
# The ASF licenses this file to You under the Apache License, Version 2.0
# (the "License"); you may not use this file except in compliance with
# the License.  You may obtain a copy of the License at
#
#    http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.

# see kafka.server.KafkaConfig for additional details and defaults

############################# Server Basics #############################

# The id of the broker. This must be set to a unique integer for each broker.
broker.id=0

############################# Socket Server Settings #############################

# The address the socket server listens on. It will get the value returned from
# java.net.InetAddress.getCanonicalHostName() if not configured.
#   FORMAT:
#     listeners = listener_name://host_name:port
#   EXAMPLE:
#     listeners = PLAINTEXT://your.host.name:9092
listeners=PLAINTEXT://10.10.10.225:9092

# host.name and port are deprecated legacy settings: Kafka 2.2 only uses them
# when listeners is not set, so these two lines can be removed.
host.name=10.10.10.225
port=9092

# Hostname and port the broker will advertise to producers and consumers. If not set,
# it uses the value for "listeners" if configured.  Otherwise, it will use the value
# returned from java.net.InetAddress.getCanonicalHostName().
advertised.listeners=PLAINTEXT://10.10.10.225:9092

# Maps listener names to security protocols, the default is for them to be the same. See the config documentation for more details
#listener.security.protocol.map=PLAINTEXT:PLAINTEXT,SSL:SSL,SASL_PLAINTEXT:SASL_PLAINTEXT,SASL_SSL:SASL_SSL

# The number of threads that the server uses for receiving requests from the network and sending responses to the network
num.network.threads=3

# The number of threads that the server uses for processing requests, which may include disk I/O
num.io.threads=8

# The send buffer (SO_SNDBUF) used by the socket server
socket.send.buffer.bytes=102400

# The receive buffer (SO_RCVBUF) used by the socket server
socket.receive.buffer.bytes=102400

# The maximum size of a request that the socket server will accept (protection against OOM)
socket.request.max.bytes=104857600

############################# Log Basics #############################

# A comma separated list of directories under which to store log files
# In a .properties file "\\" is an escaped backslash, so this value is the
# path \KafkaLogs\KafKaLog at the root of the broker's current drive.
log.dirs=\\KafkaLogs\\KafKaLog

# The default number of log partitions per topic. More partitions allow greater
# parallelism for consumption, but this will also result in more files across
# the brokers.
num.partitions=1

# The number of threads per data directory to be used for log recovery at startup and flushing at shutdown.
# This value is recommended to be increased for installations with data dirs located in RAID array.
num.recovery.threads.per.data.dir=1

############################# Internal Topic Settings #############################
# The replication factor for the group metadata internal topics "__consumer_offsets" and "__transaction_state"
# For anything other than development testing, a value greater than 1 is recommended for to ensure availability such as 3.
offsets.topic.replication.factor=1
transaction.state.log.replication.factor=1
transaction.state.log.min.isr=1

############################# Log Flush Policy #############################

# Messages are immediately written to the filesystem but by default we only fsync() to sync
# the OS cache lazily. The following configurations control the flush of data to disk.
# There are a few important trade-offs here:
#    1. Durability: Unflushed data may be lost if you are not using replication.
#    2. Latency: Very large flush intervals may lead to latency spikes when the flush does occur as there will be a lot of data to flush.
#    3. Throughput: The flush is generally the most expensive operation, and a small flush interval may lead to excessive seeks.
# The settings below allow one to configure the flush policy to flush data after a period of time or
# every N messages (or both). This can be done globally and overridden on a per-topic basis.

# The number of messages to accept before forcing a flush of data to disk
#log.flush.interval.messages=10000

# The maximum amount of time a message can sit in a log before we force a flush
#log.flush.interval.ms=1000

############################# Log Retention Policy #############################

# The following configurations control the disposal of log segments. The policy can
# be set to delete segments after a period of time, or after a given size has accumulated.
# A segment will be deleted whenever *either* of these criteria are met. Deletion always happens
# from the end of the log.

# The minimum age of a log file to be eligible for deletion due to age
log.retention.hours=168

# A size-based retention policy for logs. Segments are pruned from the log unless the remaining
# segments drop below log.retention.bytes. Functions independently of log.retention.hours.
#log.retention.bytes=1073741824
log.cleaner.enable=false

# The maximum size of a log segment file. When this size is reached a new log segment will be created.
log.segment.bytes=1073741824

# The interval at which log segments are checked to see if they can be deleted according
# to the retention policies
log.retention.check.interval.ms=300000

############################# Zookeeper #############################

# Zookeeper connection string (see zookeeper docs for details).
# This is a comma separated host:port pairs, each corresponding to a zk
# server. e.g. "127.0.0.1:3000,127.0.0.1:3001,127.0.0.1:3002".
# You can also append an optional chroot string to the urls to specify the
# root directory for all kafka znodes.
zookeeper.connect=localhost:2181

# Timeout in ms for connecting to zookeeper
zookeeper.connection.timeout.ms=6000

############################# Group Coordinator Settings #############################

# The following configuration specifies the time, in milliseconds, that the GroupCoordinator will delay the initial consumer rebalance.
# The rebalance will be further delayed by the value of group.initial.rebalance.delay.ms as new members join the group, up to a maximum of max.poll.interval.ms.
# The default value for this is 3 seconds.
# We override this to 0 here as it makes for a better out-of-the-box experience for development and testing.
# However, in production environments the default value of 3 seconds is more suitable as this will help to avoid unnecessary, and potentially expensive, rebalances during application startup.
group.initial.rebalance.delay.ms=0
```
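The IP address and listener lines are the part of server.properties you edit by hand. A wrong advertised.listeners value is a common reason why clients can reach the broker and then fail to produce or consume: they bootstrap successfully but are told to reconnect to the advertised address. A quick pre-install check can be sketched in Python. `load_properties` and `check_broker_config` are hypothetical helpers, and the parser is a deliberately simplified stand-in for java.util.Properties.

```python
from urllib.parse import urlsplit


def load_properties(text: str) -> dict[str, str]:
    """Parse simple key=value lines, skipping blank lines and #/! comments.

    Simplified compared with java.util.Properties: only the doubled
    backslash escape is decoded; ':' separators and line continuations
    are not supported.
    """
    props: dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line[0] in "#!":
            continue
        key, sep, value = line.partition("=")
        if sep:
            props[key.strip()] = value.strip().replace("\\\\", "\\")
    return props


def check_broker_config(props: dict[str, str]) -> list[str]:
    """Return warnings for a few common single-broker mistakes."""
    warnings = []
    listeners = props.get("listeners", "")
    for key in ("host.name", "port"):
        if key in props and listeners:
            warnings.append(f"{key} is deprecated and ignored because listeners is set")
    # advertised.listeners falls back to listeners when it is not set.
    advertised = props.get("advertised.listeners", listeners)
    for entry in filter(None, (e.strip() for e in advertised.split(","))):
        host = urlsplit(entry).hostname
        if host in (None, "0.0.0.0"):
            warnings.append(f"{entry}: no routable host for clients")
        elif host in ("localhost", "127.0.0.1"):
            warnings.append(f"{entry}: only reachable from this machine")
    return warnings
```

Run against the configuration above, the check reports only the deprecated host.name and port lines. Those lines are harmless, but they can be deleted.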

Write the install.bat file

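```bat
@echo off
:: Go to the package root, two levels above this script's folder.
:: cd /d "%~dp0..\.." also works when the script is started with
:: "Run as administrator", where the working directory is System32.
cd /d "%~dp0..\.."

:: Add the system environment variable JAVA_HOME
@echo Adding the Java environment variable
set regpath=HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Control\Session Manager\Environment
set evname=JAVA_HOME
set javapath=%cd%\jdk1.8.0_202
reg add "%regpath%" /v %evname% /d "%javapath%" /f

@echo Installing zookeeper
"%cd%\nssm-2.24\win64\nssm" install zookeeper "%cd%\zookeeper-3.4.10\bin\zkServer.cmd"

@echo Installing kafka
"%cd%\nssm-2.24\win64\nssm" install kafka "%cd%\kafka_2.12-2.2.0\bin\windows\kafka-server-start.bat" "%cd%\kafka_2.12-2.2.0\config\server.properties"

@echo Starting the zookeeper service
"%cd%\nssm-2.24\win64\nssm" start zookeeper

@echo Starting the kafka service
"%cd%\nssm-2.24\win64\nssm" start kafka
pause
```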
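install.bat hard-codes the folder name of every package. It also writes JAVA_HOME before it calls nssm, so a misnamed JDK folder can leave JAVA_HOME pointing at a directory that does not exist. A pre-flight check is easy to sketch in Python and can be run against the package root (the folder two levels above the script). `missing_components` and the `REQUIRED` table are hypothetical. Their paths are copied from install.bat, plus ZooKeeper's conf\zoo.cfg, which zkServer.cmd reads.

```python
from pathlib import Path

# Files that install.bat expects, relative to the package root.
# Folder names are the versions used in this article; adjust them if yours differ.
REQUIRED = {
    "JDK": "jdk1.8.0_202/bin/java.exe",
    "nssm": "nssm-2.24/win64/nssm.exe",
    "ZooKeeper": "zookeeper-3.4.10/bin/zkServer.cmd",
    "ZooKeeper config": "zookeeper-3.4.10/conf/zoo.cfg",
    "Kafka": "kafka_2.12-2.2.0/bin/windows/kafka-server-start.bat",
    "Kafka config": "kafka_2.12-2.2.0/config/server.properties",
}


def missing_components(root: str) -> list[str]:
    """Return the names of components whose expected file is missing under root."""
    base = Path(root)
    return [name for name, rel in REQUIRED.items() if not (base / rel).is_file()]
```

An empty list means the layout matches what install.bat expects. For example, `missing_components(r"D:\kafka-env")` checks a hypothetical package root on drive D.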


Write the uninstall.bat file

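```bat
@echo off
:: Go to the package root, two levels above this script's folder
cd /d "%~dp0..\.."

:: Stop Kafka before ZooKeeper, then remove each service without a prompt
@echo Uninstalling kafka
"%cd%\nssm-2.24\win64\nssm" stop kafka
"%cd%\nssm-2.24\win64\nssm" remove kafka confirm

@echo Uninstalling zookeeper
"%cd%\nssm-2.24\win64\nssm" stop zookeeper
"%cd%\nssm-2.24\win64\nssm" remove zookeeper confirm
pause
```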


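Common nssm commands

- nssm install servername //create the servername service (with no program path, nssm opens its GUI installer)

- nssm start servername //start the service

- nssm stop servername //stop the service

- nssm restart servername //restart the service

- nssm remove servername //delete the servername service (append confirm to skip the confirmation prompt, as uninstall.bat does)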
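If the same operations need to be driven from a script, a thin wrapper that builds the nssm argument lists is enough. The Python sketch below covers only the five commands listed above. `nssm_argv` and `run_nssm` are hypothetical helpers. `nssm` is assumed to be on PATH unless a full path, such as the one to nssm-2.24\win64\nssm.exe, is passed.

```python
import subprocess

# The subcommands listed above.
NSSM_ACTIONS = ("install", "start", "stop", "restart", "remove")


def nssm_argv(action: str, service: str, *args: str, nssm: str = "nssm") -> list[str]:
    """Build the argument list for one nssm call."""
    if action not in NSSM_ACTIONS:
        raise ValueError(f"unsupported nssm action: {action!r}")
    argv = [nssm, action, service, *args]
    # Without "confirm", nssm remove shows a confirmation prompt.
    if action == "remove" and "confirm" not in args:
        argv.append("confirm")
    return argv


def run_nssm(action: str, service: str, *args: str, nssm: str = "nssm") -> int:
    """Run nssm and return its exit code (Windows only; typically needs an elevated prompt)."""
    return subprocess.run(nssm_argv(action, service, *args, nssm=nssm)).returncode
```

For example, `run_nssm("restart", "kafka")` does the same as typing `nssm restart kafka` in an administrator command prompt.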

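Summary

NSSM can install any console program as a Windows service in the same way it installs ZooKeeper and Kafka here. Combined with scheduled tasks, it can handle a lot of routine work.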

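【江湖人士】(jhrs.com) Contributed by IT菜鸟. The views expressed do not necessarily represent 江湖人士. If you republish this article, please credit the source: https://jhrs.com/2021/43592.html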
